Illustration: Daniel Zender

Music implementation is the backbone of any interactive video game soundtrack.

In an interactive experience, music needs to adapt to a variety of gameplay parameters: player movement, player location, combat state, enemy state – the list goes on. The actions that a music system adapts to vary widely based on the type of game. For example, the music states in a first-person shooter can differ greatly from those in a platformer. However, at the core, there are fundamental abstractions that can be applied when building an interactive music system for any type of game.

Some initial thoughts on interactive music systems

Something to keep in mind when building an interactive music system for a game is that there’s no magic bullet. Just because a particular strategy worked really well for one game doesn’t mean it’ll be right for your game in exactly the same way. That being said, basic principles derived from one game mechanic can easily be translated to another. Transferring what you learn from one game to the next is one of the most important things to keep in mind when doing video game music research.

Additionally, “more complex” doesn’t always equal “better.” It’s important to retain a balance of interactivity that’s relative to what the player sees on-screen. What would they be expecting to hear at any given moment? No matter what, the score should always be in service to the gameplay; the gameplay is never a showcase for the score.

What’s a game engine?

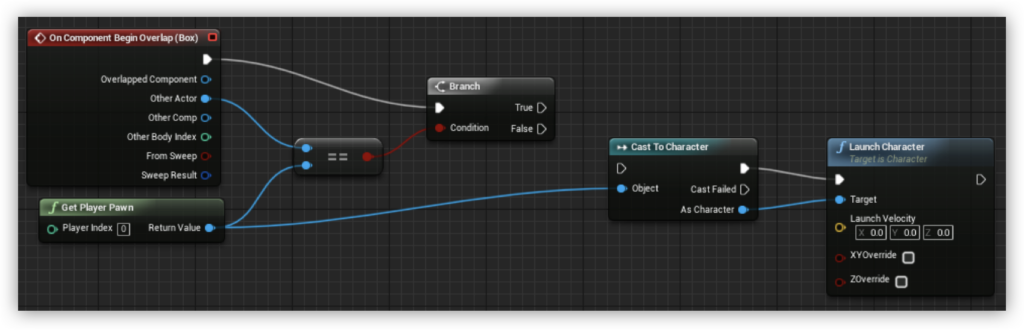

Video games are built within software known as game engines. A game engine is a technical environment that provides developers a set of tools to create all sorts of different assets for their game, as well as control over the behavior of those assets. So, the first question to answer is: What engine are you using? Or more generally, what’s the technical toolset that you have available? Unity and Unreal (two commercially available and widely used game engines) have different out-of-the-box features that’ll give you different possibilities. For instance, audio behavior in Unreal is scripted using a visual scripting system called Blueprints (you can get started with learning Blueprints in Unreal using these tutorials).

On the other hand, audio behavior in Unity is scripted primarily using C#, a text-based programming language (you can get started learning C# in Unity with the tutorials located here).

Both game engines have a separate suite of tools created specifically for game audio design, and each has its own benefits. In the end, you’ll need to adapt to the audio toolset that resides within the engine your game is being built in, unless you’re using additional software known as audio middleware.

What’s audio middleware?

Audio middleware refers to software that sits between your game engine and your audio engine. Middleware is responsible for controlling the behavior of audio in your game, and can be used with most commercially available game engines after some initial setup. When using middleware, you can script highly complex audio and interactive music behaviors that would often require bespoke technical solutions when using game engines alone. FMOD and Wwise are two pieces of middleware that are widely used across the industry.

FMOD uses a timeline-first structure for audio design, with a similar interface to a digital audio workstation. This tutorial provides an excellent starting point for scripting interactive music behavior in FMOD.

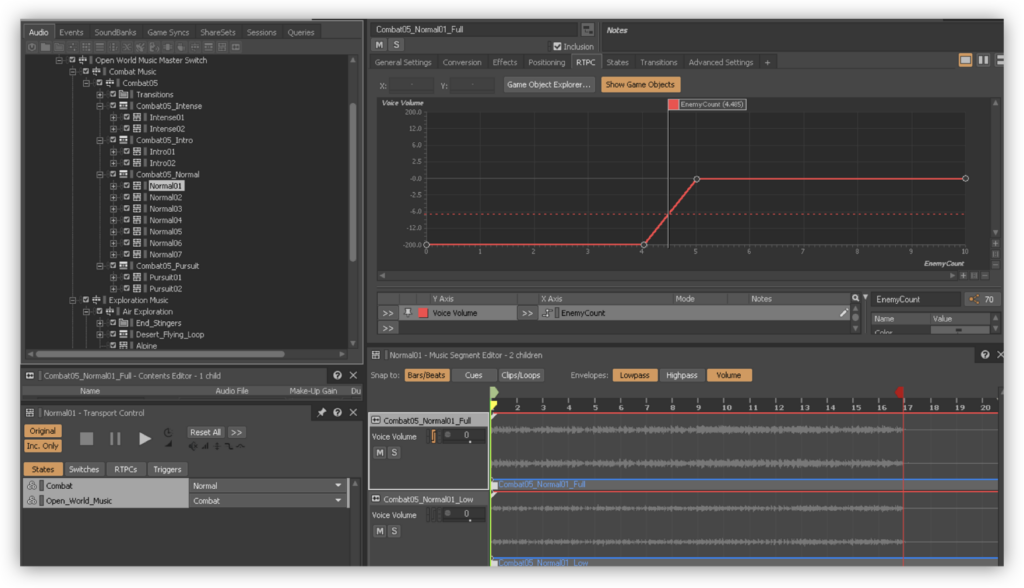

Wwise, on the other hand, uses a tree-driven structure to denote behaviors of different sounds. This tutorial provides a great starting point for scripting interactive music behavior in Wwise.

Whichever middleware you decide to use, you’ll be able to create a more cohesive system with less need for custom technical toolsets. Though the methodology will be different, there isn’t much limitation to what you can create in either one. Ultimately, your choice boils down to your preferred interface and your technical and budget constraints.

How a game’s platform impacts audio

When working with game audio assets, shorter sounds such as footsteps are usually loaded into RAM (Random Access Memory), while longer audio files such as music are streamed from disk. When streaming an audio file, chunks of the file are loaded into memory as needed, without loading the entire file into RAM all at once.
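
The difference between the two loading strategies can be sketched in a few lines of Python. This is only an illustration of the idea, not how any particular engine or middleware implements it: the function names and the 4,096-byte chunk size are assumptions, and an in-memory buffer stands in for a file on disk.

```python
import io
from typing import BinaryIO, Iterator

CHUNK_BYTES = 4096  # assumed chunk size; real engines choose their own


def load_fully(source: BinaryIO) -> bytes:
    """Short sound effect: read the whole file into memory at once."""
    return source.read()


def stream_chunks(source: BinaryIO, chunk_bytes: int = CHUNK_BYTES) -> Iterator[bytes]:
    """Long music file: yield one chunk at a time, so only a single
    chunk has to be held in memory at any moment."""
    while True:
        chunk = source.read(chunk_bytes)
        if not chunk:
            break
        yield chunk


# Stand-in for a music file on disk: 10,000 bytes of silence.
fake_music_file = io.BytesIO(bytes(10_000))
chunk_sizes = [len(chunk) for chunk in stream_chunks(fake_music_file)]
print(chunk_sizes)  # [4096, 4096, 1808]
```

The streamed version never holds more than one chunk at a time, which is why long music files are usually handled this way.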

With this in mind, the platform that your game is releasing on will impact the fidelity of your music, especially when comparing the processing power of a mobile device to a console such as the Xbox One or PlayStation 4. Your release platform will dictate your RAM budget and how much streaming overhead you have to work with, which will impact the amount of music you can stream simultaneously. In other words, these variables will directly inform the level of complexity that’s feasible in your music.
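
To get a rough feel for the numbers involved, here is a back-of-the-envelope calculation. It assumes uncompressed 16-bit stereo audio at 48 kHz and a half-second buffer per stream; both are illustrative assumptions. Shipped games usually use compressed formats and engine-specific buffer sizes, so treat the results as an upper-bound sketch rather than real budgets.

```python
def pcm_bytes(seconds: float, sample_rate: int = 48_000,
              channels: int = 2, bytes_per_sample: int = 2) -> int:
    """Uncompressed PCM size: duration x sample rate x channels x sample width."""
    return int(seconds * sample_rate * channels * bytes_per_sample)


def stream_buffer_bytes(stream_count: int, buffer_seconds: float = 0.5) -> int:
    """RAM held by streaming buffers if every stream keeps a short buffer in memory."""
    return stream_count * pcm_bytes(buffer_seconds)


print(pcm_bytes(180))          # 34560000 bytes: a 3-minute track loaded fully
print(stream_buffer_bytes(4))  # 384000 bytes: four simultaneous streams
```

Under these assumptions, fully loading one three-minute track costs roughly 90 times more RAM than buffering four streams, which is the trade-off that makes layered music feasible on tighter platforms.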

The importance of prioritization

Another important thing to do before diving into music scripting is to identify the features that are most important to your game. The core gameplay, main mechanics, and narrative pillars are all great considerations. Each of these will inform the most important interactions between gameplay and music, and these interactions are where dedicating technical resources is most valuable. Once these high-priority interactions are established, other behaviors can start to fall into place and be planned according to technical budget.

Actually getting started with building interactive music systems

With all of this in mind, where does one actually begin? Once you have an understanding of the fundamental constituents that the game is being built on, it’s important to build an outline of some initial intended states so that you have some direction when you finally begin prototyping. If you’re working in a new and unfamiliar technical environment, it would also be helpful to figure out how you’d like these music states to initially interact. From there, you can determine if those interactions are feasible in your tech right out-of-the-box, or if extra technical support will be required.
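
One lightweight way to capture such an outline before touching an engine is a small table of states and the transitions you intend to allow between them. The state names and transitions below are purely hypothetical; the point is that writing the outline down as data lets you check it for gaps.

```python
from enum import Enum


class MusicState(Enum):
    EXPLORATION = "exploration"
    TENSION = "tension"
    COMBAT = "combat"
    VICTORY = "victory"


# Hypothetical outline: which states each state is allowed to move to.
ALLOWED_TRANSITIONS = {
    MusicState.EXPLORATION: {MusicState.TENSION, MusicState.COMBAT},
    MusicState.TENSION: {MusicState.EXPLORATION, MusicState.COMBAT},
    MusicState.COMBAT: {MusicState.TENSION, MusicState.VICTORY},
    MusicState.VICTORY: {MusicState.EXPLORATION},
}


def can_transition(current: MusicState, target: MusicState) -> bool:
    """True if the outline allows moving directly from current to target."""
    return target in ALLOWED_TRANSITIONS[current]


def unreachable_states(start: MusicState) -> set:
    """States that can never be reached from start: a sign of a gap in the outline."""
    seen = {start}
    frontier = [start]
    while frontier:
        state = frontier.pop()
        for nxt in ALLOWED_TRANSITIONS[state]:
            if nxt not in seen:
                seen.add(nxt)
                frontier.append(nxt)
    return set(MusicState) - seen


print(can_transition(MusicState.EXPLORATION, MusicState.VICTORY))  # False
print(unreachable_states(MusicState.EXPLORATION))                  # set()
```

Each entry in the table is also a question for your toolset: can this transition be built out-of-the-box, or does it need extra technical support?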

Making some order out of the task ahead of you is essential. Especially as a composer working on independent games, you may be tasked with anything from composing music to mixing it, exporting it, building the system that it needs to be exported to, scripting the in-game music interactions, and managing the performance of music streams. That’s a pretty big list of responsibilities. You want to be sure that whatever you decide to do with your system highlights your strengths as a music composer and implementer, in relation to the core mechanics of the game. This goes back to the idea that more complex is not always better.

However, not all projects are the same, especially when it comes to working on larger games with more of a budget. Sometimes, when jumping on a project as a composer, the system will already be built and the development team will only be looking for music. As a music composer, this could provide an excellent starting point because your limitations and possibilities will already be defined. Where you begin can also vary depending on whether the system is being built to accommodate existing music, music is being composed to fit into a pre-built system, or the gameplay system and music are being developed in parallel. There isn’t one ideal method; like any creative endeavor, a lot of variables come into play such as personality types and production timelines.

Establishing initial music states

One of the first questions you should ask yourself when establishing initial music states is: What is the default music state of the game? Or, more technically, how should the music respond when the player is providing zero input? The answers to these questions will establish the behavior of background music in your game. Background music is what will set the game’s initial tone and ambience, so it’s important to consider how the player will be interacting with this type of music.
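
In code terms, the default state is simply what the system falls back to when nothing else is asking for attention. The following is a minimal sketch under assumed names: the condition labels and their priority order are hypothetical and would come from your own game.

```python
DEFAULT_STATE = "background"

# Hypothetical priority order: earlier entries win when several are active.
STATE_PRIORITY = ["combat", "stealth", "dialogue"]


def resolve_music_state(active_conditions: set) -> str:
    """Return the highest-priority active state, or the default when
    no gameplay condition is active (for example, zero player input)."""
    for state in STATE_PRIORITY:
        if state in active_conditions:
            return state
    return DEFAULT_STATE


print(resolve_music_state(set()))                   # background
print(resolve_music_state({"dialogue", "combat"}))  # combat
```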

Oftentimes, background music will be the vehicle that sets the tone for certain locations. Dealing with location-based music is a challenge often found in open-world games, though it isn’t exclusive to them. Different scenarios of location-based gameplay will inform how explicitly you want to delineate the borders between territories.

Some games, such as World of Warcraft, transition the music immediately when the player crosses a location border, which works very well for location-based map music. Similarly, in any Pokémon game, the score changes immediately when the player enters a new town or location. On the other hand, when the player crosses a location border in Just Cause 4, the music waits for an exit transition to occur (or for the current track to end) before transitioning to the next track.
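
The two border-crossing behaviors can be contrasted in a small sketch. This is an illustrative model only, not the implementation used by any of the games mentioned; the class, the policy names, and the track names are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LocationMusic:
    current_track: str
    policy: str  # assumed values: "immediate" or "wait_for_exit"
    pending_track: Optional[str] = None

    def on_border_crossed(self, new_track: str) -> None:
        """Player entered a new location."""
        if self.policy == "immediate":
            self.current_track = new_track
            self.pending_track = None
        else:
            # Remember the request; keep playing the current track for now.
            self.pending_track = new_track

    def on_exit_point(self) -> None:
        """Current track reached an exit transition or its end."""
        if self.pending_track is not None:
            self.current_track = self.pending_track
            self.pending_track = None


music = LocationMusic(current_track="forest", policy="wait_for_exit")
music.on_border_crossed("town")
print(music.current_track)  # forest
music.on_exit_point()
print(music.current_track)  # town
```

With the "immediate" policy, the first print would already show the new track; the deferred policy trades responsiveness for a more musical handover.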

Expanding music states with vertical remixing and horizontal re-sequencing

After establishing the baseline level of background music, it’s best to figure out the changes, if any, that need to be made to music implementation to support other gameplay states. It’s important to determine what an “action” scenario looks like in your game. Not all games are violent, so the peak of action might occur during narrative moments, such as suspenseful conversations. It’s also important to determine how far the music actually needs to travel from the default state to complement the action scenario.

In video game music, there are two very popular techniques for adapting music to gameplay. Vertical remixing focuses on dynamically changing a piece’s instrumentation by weaving in different musical layers based on gameplay variables. Horizontal re-sequencing, on the other hand, focuses on the compositional or “horizontal” arrangement of a piece, changing its musical content or structural order based on gameplay variables. In the case of horizontal re-sequencing, longer pieces of music are often divided into smaller segments for the purpose of shuffling.
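
As a rough sketch of vertical remixing, each layer can be given a range of a gameplay variable over which it fades in. The layer names, the single "intensity" variable, and the thresholds below are hypothetical, and a linear fade is just one possible curve.

```python
# Hypothetical layers: (name, intensity where the fade-in starts,
# intensity where the layer is fully audible).
LAYERS = [
    ("pads", 0.0, 0.0),        # always on
    ("percussion", 0.2, 0.5),
    ("brass", 0.6, 0.9),
]


def layer_gains(intensity: float) -> dict:
    """Map a gameplay intensity in [0, 1] to a gain in [0, 1] per layer."""
    intensity = max(0.0, min(1.0, intensity))
    gains = {}
    for name, start, full in LAYERS:
        if intensity >= full:
            gains[name] = 1.0
        elif intensity <= start:
            gains[name] = 0.0
        else:
            # Linear fade between the two thresholds.
            gains[name] = (intensity - start) / (full - start)
    return gains


print(layer_gains(0.0))  # {'pads': 1.0, 'percussion': 0.0, 'brass': 0.0}
print(layer_gains(1.0))  # {'pads': 1.0, 'percussion': 1.0, 'brass': 1.0}
```

All layers play in sync the whole time; only their volumes change, which is what keeps the transitions seamless.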

A combination of the above techniques could also work for your game. For example, in Just Cause 4, flight exploration music relies heavily on the vertical remixing technique. Different layers of instrumentation fade in based on whether the player is interacting with their wingsuit or parachute, whether they’re in a dangerous area or near the ground, etc.

Conversely, combat music in Just Cause 4 uses a blend of both vertical remixing and horizontal re-sequencing. Different instrumentation layers fade in and out as the enemy count increases and decreases. Horizontally, different segments of music are organized into categories based on intensity. At a sustained intensity of combat, different sections of music from the same ‘intensity category’ are shuffled with each other. This helps maintain a thematic level of intensity without fatiguing the listener, and also without having the music feel like a loop. When the intensity of the combat drastically changes, segments of music from different intensity categories are then added to the sequence.
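
The horizontal side of this approach can be sketched as follows. This is loosely modeled on the description above and is not Just Cause 4’s actual implementation: the segment names, the two categories, and the no-immediate-repeat rule are all assumptions made for illustration.

```python
import random
from typing import List, Optional

# Hypothetical segments grouped by intensity category.
SEGMENTS = {
    "low": ["low_a", "low_b", "low_c"],
    "high": ["high_a", "high_b", "high_c"],
}


def next_segment(category: str, previous: Optional[str], rng: random.Random) -> str:
    """Pick a segment from the category, never repeating the one just played."""
    candidates = [s for s in SEGMENTS[category] if s != previous]
    return rng.choice(candidates)


def build_sequence(categories: List[str], seed: int = 0) -> List[str]:
    """Turn a timeline of intensity categories into a sequence of segments."""
    rng = random.Random(seed)
    sequence = []
    previous = None
    for category in categories:
        previous = next_segment(category, previous, rng)
        sequence.append(previous)
    return sequence


# Sustained low-intensity combat, then a jump to high intensity.
print(build_sequence(["low", "low", "low", "low", "high", "high", "high"]))
```

While the intensity holds steady, segments are shuffled within one category; when it changes, the next pick simply comes from a different category.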

Bringing your soundtrack together

We’ve now established a wide spectrum of intensities for your game, spanning background music to high-intensity action. Of course, there will be nuances to account for that affect the characteristics of the intensity spectrum such as different action scenarios, classifications of missions / quests, environments, etc. That said, with a robust baseline established, your interactive music techniques can be inherited by and expanded to other aspects of your game. As players progress through the game, these modes of interactivity gradually start to become musical motifs in and of themselves. This creates a familiarity of musical change across the broad scope of the experience, signifying to the player how their gameplay choices are impacting their experience – both at a high level and moment-to-moment.

February 11, 2020
