Illustration: George Wylesol

Video game audio is in a creative league of its own, especially when compared to audio in linear mediums such as film.

When working on a film, a sound designer knows the exact sound that’s needed for a precise moment. When a car whooshes past the screen, a designer can hand-craft every engine rev, gear shift, and tire spin. They can then decide where each element sits in the mix, confident that the audience will experience the scene the same way every time they watch the film.

Game audio is different. When a player engages with a nearby on-screen vehicle in a video game, the auditory experience depends entirely on the behaviors of the player and the game world. The way the player hears a single vehicle can vary based on a number of things: the player’s orientation towards the vehicle, their distance from it, the speed of the vehicle, and the number of activities in the world around the player. The list goes on. The experience of a video game is controlled by the player, and with each player, that experience could be different.

When a sound designer is tasked with creating an auditory experience for a game, there are many variables they need to keep in mind during the creative process. A sound designer is therefore really creating a system of sounds, built around a series of questions that usually begin with, “How will the sound behave if the player does ___?” This behavior can’t be accounted for solely through the design capabilities of a digital audio workstation (DAW). Additional tools must be employed to account for such behavior and modulate the sound accordingly. That behavior is dictated through the use of a game engine.

Utilizing game engines and game data

Video games are built within software known as game engines. A game engine is a technical environment that provides developers with a set of tools to create all sorts of assets for their game, as well as control over the behavior of those assets. A game engine handles all tasks in the virtual world, spanning physics, character movements, animations, rendering, visual effects, audio, and more.

Sound events

Most importantly, for sound designers, the game engine keeps track of when to (and when not to) call sound events. Because sound designers don’t know exactly how a player will interact with an environment, they have to set up behaviors within their sounds to account for various player responses and actions. This is done through the use of game data.

Game data and objects

In a game engine, game data can come in a variety of forms. Many game engines organize data in the form of objects. These generic objects vary based on the engine that you’re working in, and can represent all sorts of things depending on context, such as characters, vehicles, or scenery. Data on these game objects is then further manipulated through the use of sub-components and properties. Some examples of data that might exist on these objects are:

- Gameplay states (e.g. idle vs. combat)

- Combat parameters (e.g. enemy count)

- Player data (e.g. location, health)

- AI data (e.g. nearby enemy states)

- Session data (e.g. save state, game progression)

Sound designers can then create and utilize different data from these objects to control sound behavior.
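As a rough illustration of reading data from a game object to drive sound, here is a minimal, engine-agnostic sketch in Python. The `GameObject` class, the property names, and the health threshold are all hypothetical; real engines such as Unity or Unreal expose this data through their own object and component systems.

```python
from dataclasses import dataclass, field


@dataclass
class GameObject:
    """Hypothetical generic engine object carrying data that audio can read."""
    name: str
    properties: dict = field(default_factory=dict)


def choose_music_state(player: GameObject) -> str:
    """Pick a music state from gameplay data stored on the player object."""
    # Assumed rule: low health takes priority over combat, combat over idle.
    if player.properties.get("health", 100) <= 25:
        return "low_health"
    if player.properties.get("enemy_count", 0) > 0:
        return "combat"
    return "idle"


player = GameObject("player", {"health": 80, "enemy_count": 3})
print(choose_music_state(player))  # combat
```

The point is only that sound behavior becomes a function of game data: change the data on the object and the audio decision changes with it.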

Game object and component structures in the Unity game engine (from the Wwise Adventure Game)

Data extraction and manipulation can also vary based on the engine that you’re using. Unity and Unreal (two commercially available and widely used game engines) have different out-of-the-box features that give you different possibilities. In Unreal, for instance, fundamental objects in the engine are referred to as actors, and their component behaviors are scripted using a visual scripting system called Blueprints (Blueprint functionality can also be written in the C++ programming language). You can learn about working in Unreal Engine 4 here.

The Unreal Engine 4 editor

Conversely, in Unity, these fundamental objects in the engine are referred to as game objects and their component behavior is scripted using C# (you can learn about working in Unity here).

The Unity editor interfacing with C# in Visual Studio

Sometimes objects will be sound-specific, and sometimes sound will be placed on other objects that handle a variety of behaviors simultaneously, such as a vehicle. Sound objects in a game engine either emit sound into the game world (emitters) or listen for sound in the game world (listeners).

Listeners, by definition, ‘listen’ for all of the sound in the game world triggered by emitters. At any given moment, there could be hundreds of emitters in the game world. Conversely, in most cases only one listener at a time will exist in the game world. Based on the style of gameplay, a listener can be placed on the player, the camera, or somewhere else entirely. Both of these types of objects are making constant calculations based on game behavior. From an audio standpoint, along with all other gameplay tasks, these calculations are all done inside of the game engine.
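To make the emitter/listener relationship concrete, the sketch below models many emitters and a single listener, and computes the kind of per-emitter value an engine might recalculate continuously (here, just distance). The class names and the loudspeaker/fountain positions are hypothetical and not tied to any particular engine.

```python
import math
from dataclasses import dataclass


@dataclass
class Emitter:
    """Hypothetical sound source placed in the world."""
    name: str
    position: tuple  # (x, y, z) in world units


@dataclass
class Listener:
    """Hypothetical 'ears' of the game, e.g. attached to the player or camera."""
    position: tuple  # (x, y, z) in world units


def distances_to_listener(listener: Listener, emitters: list) -> dict:
    """Return the straight-line distance from the listener to each emitter."""
    return {e.name: math.dist(listener.position, e.position) for e in emitters}


listener = Listener((0.0, 0.0, 0.0))
emitters = [
    Emitter("loudspeaker", (3.0, 4.0, 0.0)),
    Emitter("fountain", (0.0, 0.0, 10.0)),
]
print(distances_to_listener(listener, emitters))
```

In a real game this calculation would run every frame or audio update for every active emitter, which is part of why the bookkeeping is left to the engine.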

There’s an exception to this, however. As you’ve probably started to gather, data calculations in game engines start to become incredibly complex. Additionally, sometimes built-in and user-friendly tools for audio are lacking in a game engine. To mitigate this, sound designers often integrate additional software into their toolkit known as audio middleware.

Using audio middleware

‘Audio middleware’ is a term used for software that sits between your game engine and your audio engine. Middleware controls the behavior of audio in your game and is used in conjunction with your game engine. By using middleware, designers can script highly complex audio and music behaviors in a user-friendly way, while leveraging data sent from the game engine. Once the middleware uses this data to manipulate sound, it sends audio data back to the game engine. In this way, audio middleware manages the behavior of both emitters and listeners, and provides designers with a vast array of technical tools to manage the behavior of sound.
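The round trip described above (the game sends data in, the middleware decides how the sound should behave) can be sketched with a toy stand-in. `ToyMiddleware`, its methods, the `speed_kmh` parameter, and the pitch formula are all invented for illustration; this is not the FMOD or Wwise API.

```python
class ToyMiddleware:
    """Hypothetical stand-in for audio middleware (not a real product's API)."""

    def __init__(self):
        self.parameters = {}  # latest game data received from the engine
        self.events = {}      # event name -> behavior authored by the designer

    def register_event(self, name, behavior):
        """Designer side: attach scripted behavior to a named event."""
        self.events[name] = behavior

    def set_parameter(self, name, value):
        """Engine side: push a piece of game data to the middleware."""
        self.parameters[name] = value

    def post_event(self, name):
        """Engine side: trigger an event; behavior is resolved from game data."""
        return self.events[name](self.parameters)


def engine_loop_behavior(params):
    # Assumed mapping: pitch rises linearly with vehicle speed.
    speed = params.get("speed_kmh", 0.0)
    return {"clip": "engine_loop", "pitch": 1.0 + speed / 200.0}


audio = ToyMiddleware()
audio.register_event("vehicle_engine", engine_loop_behavior)
audio.set_parameter("speed_kmh", 100.0)
print(audio.post_event("vehicle_engine"))  # {'clip': 'engine_loop', 'pitch': 1.5}
```

The split is the useful part: the game code only sets parameters and posts events, while the behavior attached to the event belongs to the sound designer.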

Much like game engines, the specific tools vary based on the audio middleware you’re working in. FMOD and Wwise are two widely used middleware options in the industry. FMOD provides a timeline-driven, DAW-like interface for editing sound. Instances of audio are then created as encapsulated events, through which behavior is scripted (you can learn more about working with FMOD here).

The FMOD editor

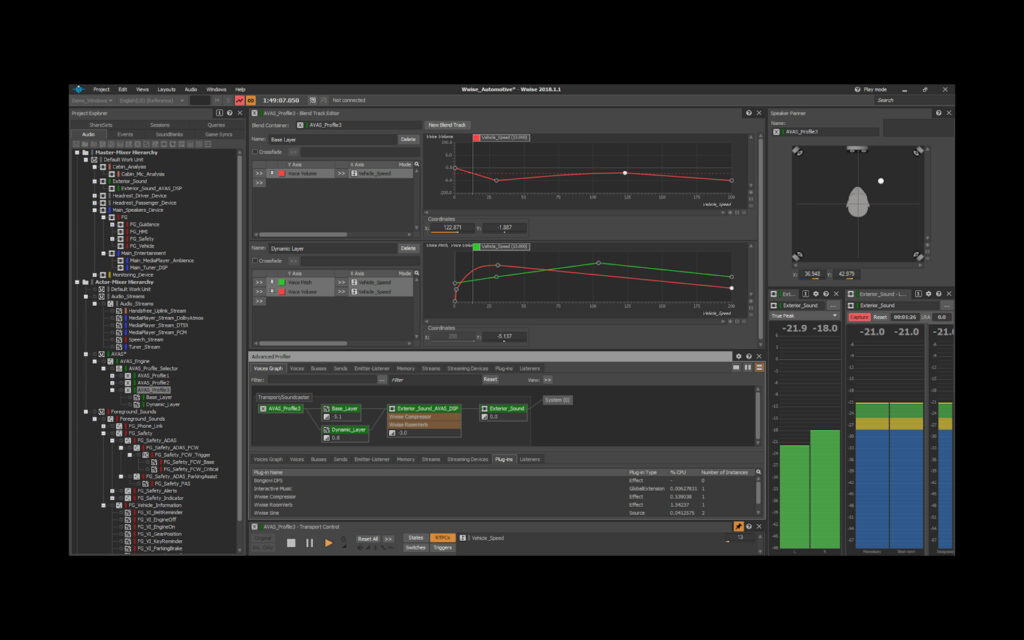

Wwise, on the other hand, uses an object-based, tree-driven routing structure to modify the behaviors of different sounds. These objects are then instantiated through the use of scripted event triggers, which control the different actions that occur within Wwise. Wwise has a series of certification tutorials located here that can help get you up and running with the middleware quickly.

The Wwise editor

Audio middleware provides a visual interface that sound designers can use to script sound behavior. In a game engine, this behavior would often need to be built bespoke in code, using that game engine’s programming language. This is the power of audio middleware in a sound designer’s workflow: the creation of complex sound behaviors is offloaded from programmers and placed in the hands of sound designers. Additionally, because audio middleware interfaces directly with your game engine, functionality can be expanded to fit the possibilities of that game engine.

How game data can change a sound effect

As previously stated, a sound designer needs to create their sounds with game data in mind, and they need to ask themselves the question, “How will the sound behave if the player does ___?” As you can imagine, the number of data points that can be considered for a single effect can quickly become overwhelming. Let’s break an example down to two points of data: distance and orientation.

2D sounds

In game audio, typical sound effects can be broken down into two broad categories: 2D sounds and 3D sounds. 2D sounds are simple; they act as regular sound effects. These are effects that are triggered in the world with no distance falloff or orientation. Therefore, when 2D sounds are placed on an emitter, their resulting sound effects are played equally through the front left and front right speakers. In this setup, a stereo file would sound exactly the same outside of the game engine as it does inside the game engine.

These types of sound effects are typically used to underscore UI elements. In this application, the user is usually navigating a menu of some sort, and these sounds provide focused feedback to their movements. Fundamentally, these can remain static as the focal point of the soundscape.

3D sounds

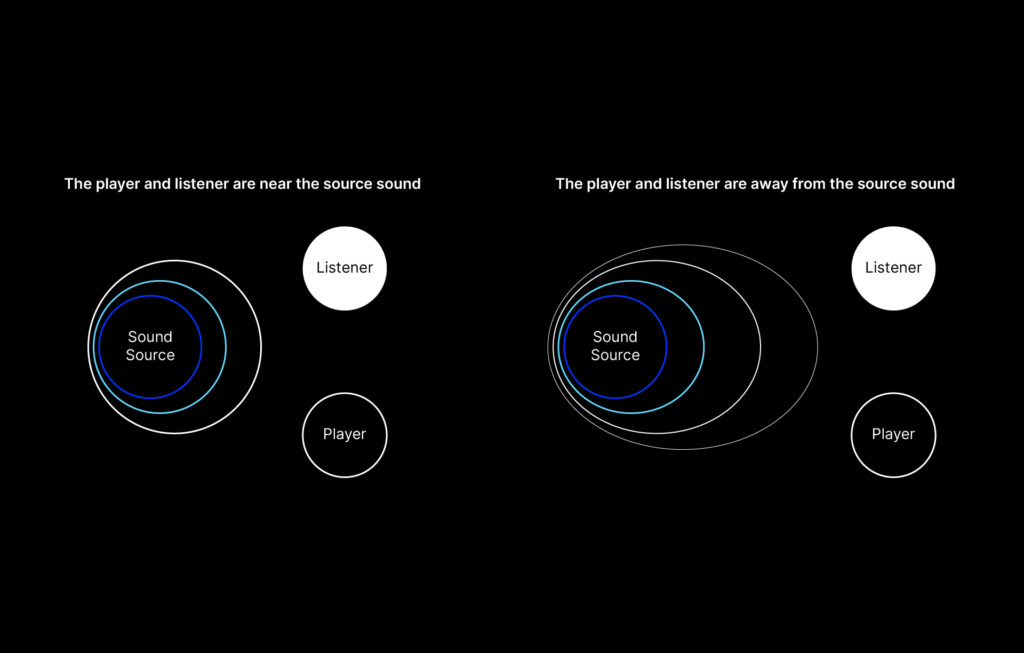

3D sounds are a bit more complicated. These are sounds that are placed virtually in the 3D world and change based on different types of game data, notably distance and orientation. Let’s use an example of a single loudspeaker broadcasting announcements to a town square. This is an example of a very localized sound that would be emitting from a single point in space. Simply put, as the listener moves closer to the sound, the sound effect will increase in volume, and when the listener moves farther away from the sound, the sound will decrease in volume.
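The distance behavior of the loudspeaker can be sketched as a simple falloff function. This assumes a linear curve between a hypothetical minimum and maximum distance; engines and middleware typically let you draw your own attenuation curve, and many default to non-linear shapes, so treat the numbers here as placeholders.

```python
def distance_gain(distance, min_distance=1.0, max_distance=50.0):
    """Return a volume multiplier in [0, 1] for a listener at `distance`.

    Assumed model: full volume inside `min_distance`, silence beyond
    `max_distance`, and a straight-line fade in between.
    """
    if distance <= min_distance:
        return 1.0
    if distance >= max_distance:
        return 0.0
    return 1.0 - (distance - min_distance) / (max_distance - min_distance)


# Listener walking away from the loudspeaker in the town square.
for d in (0.5, 10.0, 25.5, 60.0):
    print(d, round(distance_gain(d), 3))
```

With these defaults, a listener standing halfway through the falloff range (25.5 units away) hears the loudspeaker at half volume.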

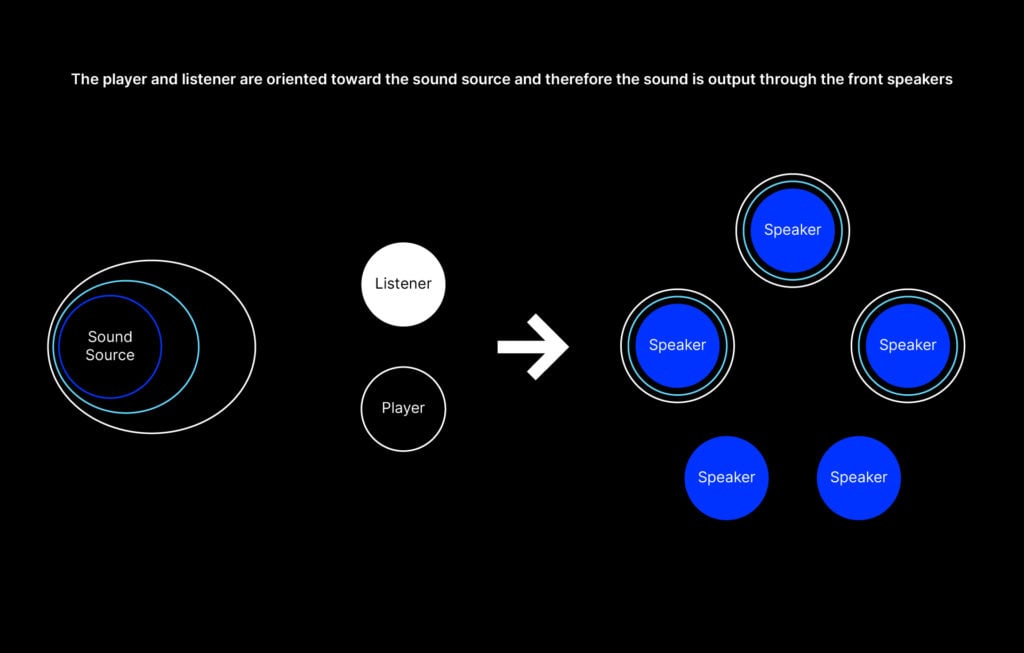

That covers distance at a basic level, but what about orientation? In a surround sound setup, the direction that a listener is oriented towards in relation to an emitter determines the speaker(s) used to output the sound. Therefore, if a listener is facing a sound directly, the sound will be played through a player’s front speakers. Likewise, if a listener is facing away from an emitter, the sound will be played through the player’s rear speakers. In this way, you can think of listener orientation as controlled panning automation, which you might be familiar with from working in a DAW.
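One way to picture orientation as "panning automation" is to compare the listener’s facing direction with the direction to the emitter. The sketch below works in a top-down 2D view and returns two values in [-1, 1]: front/rear and left/right balance. The function name and the coordinate convention (x to the right, y forward when facing `(0, 1)`) are assumptions for illustration; how those values are distributed across actual speakers depends on the engine or middleware.

```python
import math


def speaker_balance(listener_pos, listener_forward, emitter_pos):
    """Return (front_rear, left_right) balance for an emitter, top-down 2D.

    front_rear: +1 means directly ahead, -1 directly behind.
    left_right: +1 means directly to the right, -1 directly to the left.
    """
    dx = emitter_pos[0] - listener_pos[0]
    dy = emitter_pos[1] - listener_pos[1]
    dist = math.hypot(dx, dy)
    fwd_len = math.hypot(listener_forward[0], listener_forward[1])
    if dist == 0 or fwd_len == 0:
        return 0.0, 0.0  # emitter on top of the listener: no direction
    # Normalize both vectors so the results stay in [-1, 1].
    fx, fy = listener_forward[0] / fwd_len, listener_forward[1] / fwd_len
    dx, dy = dx / dist, dy / dist
    front_rear = fx * dx + fy * dy   # dot product: cosine of the angle
    left_right = fy * dx - fx * dy   # signed sine of the angle, right positive
    return front_rear, left_right


# Listener at the origin facing "up" the map; the loudspeaker is dead ahead.
print(speaker_balance((0, 0), (0, 1), (0, 5)))
```

As the listener turns in place, `front_rear` sweeps from +1 toward -1 while `left_right` swings to one side and back, which is the same motion you would draw by hand with a surround panner.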

When combining these elements, different possibilities start to reveal themselves. For starters, as the listener rotates in place near the sound source, the sound will be output through different combinations of speakers. Perhaps as the listener moves farther away from the emitter, the sound is passed through a low-pass filter to further highlight the emitter’s distance from the listener in space. Additionally, how might a sound’s reverberation be impacted by the surrounding environment? What happens if the sound is obstructed?
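The distance-driven low-pass idea can be sketched as a mapping from distance to filter cutoff. The cutoff range (20 kHz fully open, 1 kHz at maximum distance), the maximum distance, and the choice to interpolate on a logarithmic frequency scale are all assumptions; in practice you would tune this curve by ear in your middleware.

```python
def lowpass_cutoff_hz(distance, max_distance=50.0,
                      open_hz=20000.0, closed_hz=1000.0):
    """Return a low-pass cutoff frequency that falls as distance grows.

    Assumed model: the cutoff slides from `open_hz` at the emitter to
    `closed_hz` at `max_distance`, moving evenly in octaves rather than
    in raw hertz so the change sounds gradual.
    """
    t = min(max(distance / max_distance, 0.0), 1.0)  # 0 = near, 1 = far
    return open_hz * (closed_hz / open_hz) ** t


for d in (0.0, 25.0, 50.0):
    print(d, round(lowpass_cutoff_hz(d)))
```

This value would then be fed to whatever filter the engine or middleware provides; the sketch only covers the mapping from game data to a filter setting, not the filtering itself.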

All of these propositions can be addressed by game data extracted from the game engine and translated to scripted behaviors within audio middleware. In the next article, we’ll discuss how to set up some behaviors and strategies for manipulating your game’s sounds in real time during gameplay.

Do you have any questions on any of the topics covered in this article? Let us know in the comments below.


December 10, 2020
