Illustration: Daniel Zender

When listening to a gunshot in a game or a film, very rarely are you hearing a raw recording of that sound.

Numerous layering and design techniques come into play to create the over-the-top weapon sounds we have come to expect in visual media. Recordings from different microphone types and setups are layered with each other, and recordings from one firearm are often layered with recordings from another to create a more distinct sound. Firearm recordings can even be layered with sound effects that have nothing to do with the shot itself, giving the firearm its own sonic signature.

When it comes to video game audio, the breakdown of elements that make up a weapon is highly dependent on the type of game and that game’s sound world. For instance, a hunting simulator might handle weapons very differently than an action-driven first-person shooter. However, at its core, weapon audio design can be broken down into a few basic components that make up the sound: mechanics, low end, close detail, far detail, and tail.

Depending on gameplay context, sometimes elements of these layers will be combined or even changed altogether to fit a certain situation. For example, a World War 2 weapon might handle mechanics differently than a sci-fi weapon. Creating weapon sounds for a game requires careful consideration throughout the entire process, from obtaining source material to implementation.

Recording the source material

Because so many different textures can be derived from a single weapon, recording source material calls for a variety of techniques. This usually involves placing several microphones at various positions and distances out in the field. The different options available to recordists are important to be aware of, even when sourcing sound from a sample library.

Capturing the mechanical characteristics of a gun, for instance, often requires a microphone to be mounted on the weapon. On the other hand, capturing a close perspective of the gunshot is usually done by using a stereo microphone setup (such as an ORTF, MS, or LCR-based setup) placed a few meters behind the shooter. A close perspective can also be captured with a setup in front of the shooter; however, this will ultimately change the character of the sound. If recording for a first-person shooter, a recording taken from behind the shooter will often give a more ‘realistic’ perspective when recreated in-game.

The strategy for capturing mid and far perspectives of the weapon can often vary based on the environment of a recording. These perspectives are usually taken with stereo setups (such as ORTF or XY) or even microphone setups with higher channel counts to gain a wide perspective of the space. In most cases, an open field is a great environment to capture firearms, as it’s free of reflections. However, depending on the situation, it’s sometimes desirable to capture the reflection points of an environment. This can be useful if the purpose of the capture is to obtain indoor sources or the sound of a weapon reflecting off a nearby forest. In this case, it can help to place some microphones near key reflection points to capture the character of the location.

In any situation, it’s important to first listen to how sound travels through the recording environment before positioning microphones. Sporadic tree lines, hills, or even power lines could alter the reflection pathways of a space. It’s important to identify and avoid these problem areas to ensure that the recording contains no unexpected artifacts. As mentioned, this is also important to be aware of when obtaining sources from a sound library, in instances where capturing your own recordings isn’t an option. Different recording positions, environments, and microphone selections and setups will lead to different sonic results that can have an effect on the overall design of the weapon.

Combining the source material

In a game audio environment, weapon classifications can be broken down into two broad categories: player weapon fire and non-player weapon fire.

Player weapon fire sounds will encompass many of the layers that were mentioned previously. These are meant to immerse the player in the experience as much as possible. In a typical shooter, weapons take center stage. Therefore, player weapons will often have separate components to account for various aspects of the sound, such as the body of the weapon (also known as a ‘close’ layer), the mechanical details, the tail of the weapon, and even additional low frequency elements or sub frequencies to give the player’s weapon a greater feeling of power.

On the non-player side, the two most important elements of a firearm can be broken down into (1) close detail and (2) distant detail. This not only distinguishes non-player and player weapon sounds from each other in the soundscape, but also frees up additional frequency space during a firefight for the player’s own weapon to take focus. Additionally, the separation of components in a non-player weapon, though not as detailed, gives the player situational awareness of where the non-player weapon fire is coming from in a gameplay scenario (e.g. close by or far away). Additional volume and filtering can then be applied in middleware to emphasize positioning.

Breaking down the components of a weapon sound

As previously mentioned, each one of these components can be broken down into individualized sound design elements with source material from a variety of recordings—you can find material to layer and manipulate for your own weapon sounds on Splice Sounds here.

To start, let’s use this example of a fully-designed sniper rifle sound effect:

Now that we have an example of the fully-designed sound, let’s break down the various elements.

Body

The body of the gun sound acts as an anchor for the sonic characteristics of the fire sound. The different elements of the sound will be built around this layer.

Considering that this is a sniper rifle, we’ll begin with a close recording of an M24E1 sniper rifle:

This is a good starting point, but we’ll want to add some additional character to the sound. As we discussed, weapon designs will often combine recordings from multiple different types of firearms. Because a sniper sound is already being sourced here, additional details from a different class of weapons, such as an assault rifle, can create a unique blend.

Here’s the assault rifle in isolation:

This is a great start, but it could use a bit of snap to make the weapon feel more powerful. To do this, we can blend in some aggressive handling sounds to the body layer to occupy some of the high frequency range and add additional character to the weapon.

Here’s the handling sound in isolation:

…And here’s everything combined:

Mechanics

When a person is firing a weapon, the sound of the shot doesn’t exist in a vacuum; there are handling and mechanical noises that happen as the weapon is fired. These textures also need to be an aspect of the sound in a game. They not only bring realism to the player’s experience, but also add detail and transient content to the weapon sound.

Below is an example of the mechanics of an M14 rifle in isolation:

…And now combined with close fire:

Tail

You may have noticed that there’s limited tail content (or reverberation) in the samples used so far. In game audio weapon sound design, tail content is sometimes isolated to account for a few factors. For one, the player could be in numerous different environments at any given moment. Isolating the tail content from the rest of the weapon sound allows us to swap in different options based on the player’s environment. For instance, a weapon reverberating through a rainforest would sound different than a weapon reverberating in a desert. Additionally, once implemented in middleware, tail content might be positioned to fire at a different time than the onset of the body of the sound (depending on the weapon).
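As a rough sketch of the idea above, the snippet below picks a tail based on the player's environment and returns a delay for its onset. The file names, environment keys, and delay values are all hypothetical placeholders; in practice this selection would usually be handled by the middleware rather than hand-written code.

```python
import random

# Hypothetical asset names; a real project would point at its own tail recordings.
TAILS_BY_ENVIRONMENT = {
    "rainforest": ["tail_rainforest_01.wav", "tail_rainforest_02.wav"],
    "desert": ["tail_desert_01.wav", "tail_desert_02.wav"],
}

# Assumed per-weapon delay (in milliseconds) between the body and the tail.
TAIL_DELAY_MS = {"sniper_rifle": 40, "pistol": 15}


def pick_tail(environment, weapon, rng=random):
    """Return (tail_file, delay_ms) for the player's current environment."""
    variants = TAILS_BY_ENVIRONMENT[environment]
    # Weapons without an entry fire their tail together with the body.
    return rng.choice(variants), TAIL_DELAY_MS.get(weapon, 0)
```

Because the tail is its own asset, adding a new environment only means adding a new entry to the table; the body, mechanics, and detail layers stay untouched.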

Below is an example of tail content combining two different distant recordings of a sniper rifle:

Additional details

In some instances, a sound designer will add additional detail layers to help glue the elements of a weapon together or even engage the sub to add punch and power.

Below is an example of a ‘punch layer’ utilizing noise-based elements:

Below is an example of an LFE element that intentionally engages the sub when the weapon is fired:

Accounting for non-player fire

As mentioned previously, while player weapon fire often contains high levels of detail, the same does not necessarily need to be true for non-player fire. This is important from both a performance standpoint and a gameplay aesthetic standpoint. Less detailed non-player weapons conserve RAM and CPU and allow for more instances of the weapon to trigger simultaneously. From an aesthetic standpoint, there isn’t much of a reason for non-player weapon fire to occupy the sub, take up more frequency space than it needs to, or sound exactly like player weapon fire (unless a particular non-player weapon is very important to the current gameplay scenario).

In a typical scenario, the player simply needs to distinguish how far away the non-player fire is. Therefore, as previously mentioned, non-player weapon fire content can be broken down into just two categories: near and far.
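One possible way to use the two categories is to crossfade between the near and far layers as the shooter's distance from the player changes. The sketch below assumes an equal-power crossfade and made-up distance thresholds (`near_end` and `far_start`, in meters); neither is prescribed by any particular middleware.

```python
import math


def near_far_gains(distance, near_end=20.0, far_start=80.0):
    """Return (near_gain, far_gain) for a shooter at `distance` meters.

    Inside `near_end` only the near layer plays, beyond `far_start` only the
    far layer plays, and in between the two are blended with equal power.
    """
    if distance <= near_end:
        t = 0.0
    elif distance >= far_start:
        t = 1.0
    else:
        t = (distance - near_end) / (far_start - near_end)
    near_gain = math.cos(t * math.pi / 2)
    far_gain = math.sin(t * math.pi / 2)
    return near_gain, far_gain
```

An equal-power curve keeps the combined loudness roughly steady through the transition, so the change in character (rather than a dip in level) is what signals distance.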

Below is an example of close non-player content:

…And here is an example of distant non-player content:

Implementing the weapon sound using middleware

Now that the different component sounds have been designed, it’s time to export them from the DAW and implement them in middleware.

The separation of components allows the designer to do a couple of different things inside of middleware. The first is the addition of variation. If a designer were to export four different variations of ‘close,’ three different variations of ‘mechanics,’ four different variations of ‘tail,’ and two different variations of detail layers, there would be 4 × 3 × 4 × 2 = 96 possible combinations each time the sound is played, which keeps it from feeling repetitive. Combine this with randomized pitch and volume variation via middleware, and the sound will avoid causing ear fatigue to the listener, even after long sessions of gameplay.
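To make the arithmetic concrete, here is a minimal sketch that counts the combinations for the variation counts above and assembles one randomized shot. The layer names and the pitch and volume ranges are assumptions chosen for illustration; a middleware tool would normally handle this selection internally.

```python
import math
import random

# Number of exported variations per layer, taken from the example above.
VARIATIONS = {"close": 4, "mechanics": 3, "tail": 4, "detail": 2}


def count_combinations(variations):
    """Multiply the per-layer variation counts together."""
    return math.prod(variations.values())


def build_shot(rng=random, pitch_range_cents=50.0, volume_range_db=1.5):
    """Pick one variation per layer plus a small random pitch/volume offset."""
    layers = {name: rng.randrange(count) for name, count in VARIATIONS.items()}
    return {
        "layers": layers,
        # Assumed ranges: +/- 50 cents of pitch, up to 1.5 dB of attenuation.
        "pitch_cents": rng.uniform(-pitch_range_cents, pitch_range_cents),
        "volume_db": rng.uniform(-volume_range_db, 0.0),
    }


print(count_combinations(VARIATIONS))  # 4 * 3 * 4 * 2 = 96
```

The continuous pitch and volume offsets sit on top of the 96 discrete combinations, so consecutive shots are very unlikely to be identical.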

Implementing the sound in middleware also allows a designer to avoid stacking transients. As you may have begun to gather, with so many transient-driven elements within a single sound, an overbearing amplitude peak can easily occur on the onset of the sound. Within middleware, slight millisecond delays can be utilized to keep this from occurring, as well as add depth to the sound.
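The effect of those small delays can be illustrated with a toy model. The sketch below stands in for each layer with a simple decaying burst (an assumption; real layers are far more complex) and compares the peak of the mix when all layers start together against the peak when each is offset by a few milliseconds. The offsets and decay time are arbitrary.

```python
import math

SAMPLE_RATE = 48_000


def layer_envelope(length, decay_ms=5.0):
    """Stand-in for a transient-heavy layer: an exponentially decaying burst."""
    decay_samples = SAMPLE_RATE * decay_ms / 1000
    return [math.exp(-n / decay_samples) for n in range(length)]


def mix(layers, offsets_ms):
    """Sum the layers after delaying each one by its offset in milliseconds."""
    offsets = [round(SAMPLE_RATE * ms / 1000) for ms in offsets_ms]
    total = max(len(layer) + off for layer, off in zip(layers, offsets))
    out = [0.0] * total
    for layer, off in zip(layers, offsets):
        for n, sample in enumerate(layer):
            out[off + n] += sample
    return out


layers = [layer_envelope(2400) for _ in range(4)]
stacked_peak = max(mix(layers, [0, 0, 0, 0]))     # all onsets aligned
staggered_peak = max(mix(layers, [0, 4, 8, 12]))  # onsets spread over 12 ms
print(stacked_peak, staggered_peak)
```

In this simplified model the aligned onsets sum to four times the peak of a single layer, while the staggered version peaks well below that, since each new onset lands after the earlier layers have already started to decay.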

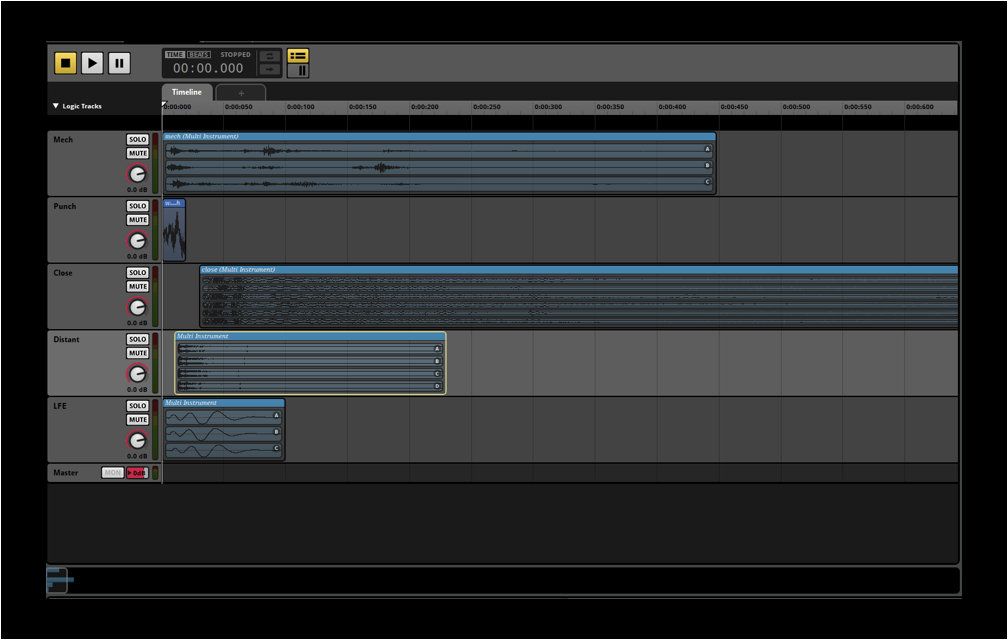

Below is an image of the different layers of player weapon sounds in FMOD, a popular middleware option.

This can be contrasted against non-player weapon sound implementation, seen below. Notice how there are significantly fewer layers at play here, saving on performance. This is especially relevant when there are several enemies firing their weapons at any given moment.

Additionally, design can further change in real time based on different aspects of gameplay. How will the sound of the weapon change as ammunition depletes? Should there be variation in sound when the player is aiming down sights versus hip firing? Are there other ways that the player can be notified of non-player weapon status that don’t require additional content? All of these considerations are very gameplay dependent and can be scripted in-engine and via middleware. These additions provide a level of detail that can vastly increase the player’s immersion.
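As one hypothetical answer to the ammunition question, a game could derive a 0-to-1 ‘low ammo’ value from the magazine state and hand it to middleware as a parameter, for example to bring up a mechanical rattle on the final rounds. The function name, the `warn_fraction` threshold, and the linear ramp below are all assumptions for the sake of illustration.

```python
def low_ammo_amount(rounds_left, magazine_size, warn_fraction=0.3):
    """Return 0.0 while the magazine is mostly full, rising linearly to 1.0 when empty.

    `warn_fraction` is the (assumed) share of the magazine at which the sound
    starts to change, e.g. the last 30% of rounds.
    """
    threshold = magazine_size * warn_fraction
    if threshold <= 0 or rounds_left >= threshold:
        return 0.0
    return 1.0 - max(rounds_left, 0) / threshold
```

Driving an existing layer's volume or filter from a value like this changes the sound over the course of a magazine without requiring any additional audio content.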

The bigger picture

In the context of a given gameplay scenario, there are numerous details to be accounted for when designing a firearm sound. These can even change based on a game’s genre; the weapon covered in this article is a generic sniper rifle, but many sound design elements would certainly change for a laser weapon, for example.

Additionally, the considerations of non-player fire in this example are accounting for a gameplay scenario of a large-scale firefight. If the game calls for a situation where ally or enemy awareness is hyper important, additional levels of detail for non-player weapons might be worth the performance tradeoff. Formulas and templates work as a great starting point, but ultimately, it’s always up to the sound designer to adapt the sonic characteristics of a weapon to gameplay.

What sorts of topics in game audio would you like to see us cover next? Let us know in the comments below.

Explore and download weapon sound elements and countless other cinematic effects:

June 3, 2021
